illustration: bing.com/create

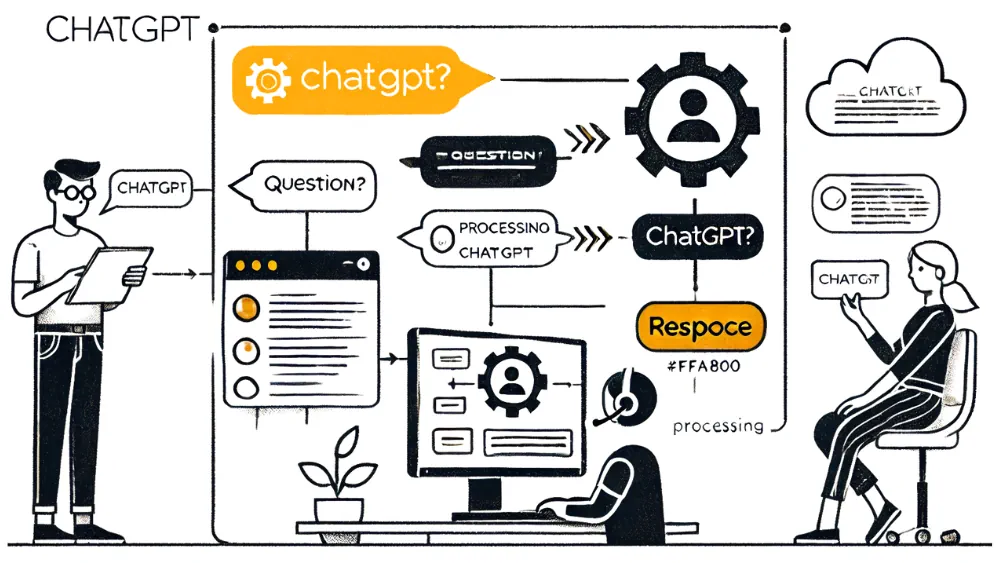

In recent years, artificial intelligence (AI) has revolutionized many areas of our lives. Large language models (LLMs), such as those behind ChatGPT and Google Gemini, are among AI’s most impressive achievements. Although their function may seem magical, it’s actually based on solid mathematical and computer science foundations.

What Are Large Language Models and How Do They Work?

LLMs are language models trained on vast amounts of text data, enabling them to process and generate natural-sounding human language. These models use neural network architectures loosely inspired by how the human brain works.

- Training: Building an LLM begins with gathering vast amounts of text data. This includes articles, books, websites, and even chat conversations. The model is then trained on this data, learning to recognize patterns and relationships between words.

- Text Generation: When we ask an LLM a question or give it a command, it processes the input text and builds a response piece by piece. At each step it chooses a next word (or word fragment) that is probable given the context so far.

- Reinforcement Learning: After the initial training, many LLMs are refined through reinforcement learning from human feedback. People rate the quality of the text the model generates, and those ratings are used to adjust the model so that it produces better responses.
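The training and text generation steps above can be illustrated with a deliberately tiny sketch. It is not how ChatGPT or Gemini are built: it assumes a simple word-pair (bigram) counter instead of a neural network, and the three-sentence corpus and all function names are made up for illustration. The idea it shares with real LLMs is only the basic loop of learning which words tend to follow which, then picking a probable continuation.

```python
from collections import Counter, defaultdict

def train_bigram_model(corpus):
    """Training: count, for every word, which words follow it in the text."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            follow_counts[current_word][next_word] += 1
    return follow_counts

def next_word_probabilities(model, word):
    """Turn the raw follow-up counts into a probability per candidate word."""
    counts = model.get(word, Counter())
    total = sum(counts.values())
    if total == 0:
        return {}
    return {candidate: n / total for candidate, n in counts.items()}

def generate(model, start_word, max_words=5):
    """Generation: repeatedly append the most probable next word (greedy)."""
    words = [start_word]
    for _ in range(max_words - 1):
        probabilities = next_word_probabilities(model, words[-1])
        if not probabilities:
            break  # the model never saw anything follow this word
        words.append(max(probabilities, key=probabilities.get))
    return " ".join(words)

# Hypothetical toy corpus; real models train on billions of words.
corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the cat sat on the rug",
]
model = train_bigram_model(corpus)
print(next_word_probabilities(model, "the"))
print(generate(model, "the", max_words=4))
```

Real systems usually sample from the probabilities rather than always taking the single most likely word, which is one reason the same prompt can yield different answers.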

Training data is like fuel for large language models (LLMs). It enables models to learn patterns, relationships, and contexts that allow them to generate coherent and meaningful text. The training data for ChatGPT, Google Gemini, and other LLMs is incredibly diverse, covering nearly all forms of text available on the internet: articles, books, websites, blog posts, comments, news, and even source code. The quality of training data is crucial to the quality of the text generated.

Architecture of Large Language Models

Large language models are incredibly complex systems, but in simplified terms they can be thought of as advanced "writing machines." Unlike their mechanical predecessors, however, LLMs can "understand" language and generate coherent text.

The foundation of every LLM is a neural network, a mathematical model loosely inspired by the structure of the human brain. It consists of numerous interconnected artificial neurons that process information. In LLMs, these neurons process numerical representations of words and phrases.
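As a rough illustration of what an "artificial neuron" is, the sketch below computes a weighted sum of its inputs and passes it through an activation function, then stacks a few neurons into layers. The sigmoid activation, the weights, and the input values are all assumptions chosen for readability; production LLMs use far larger layers, different activations, and weights learned during training.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus bias, then sigmoid."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-weighted_sum))

def layer(inputs, weight_rows, biases):
    """A layer is several neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

# Made-up numbers standing in for a numerical representation of some text.
inputs = [0.5, -1.0, 2.0]

# Two interconnected layers: the output of the first feeds the second.
hidden = layer(inputs, [[0.1, 0.2, 0.3], [-0.4, 0.5, 0.6]], [0.0, 0.1])
output = layer(hidden, [[1.0, -1.0]], [0.0])

print(hidden)
print(output)
```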

How does it work in practice? When we input a text prompt in ChatGPT or Google Gemini, the model transforms it into a sequence of numbers representing individual words or word fragments, known as tokens. The data then passes through successive layers of the neural network. In each layer, an attention mechanism allows the model to focus on different parts of the input text, enabling it to take the context into account. Finally, the model outputs numbers indicating how likely each possible next token is, and the selected tokens are converted back into text.
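The pipeline described above can be sketched in a few lines, under heavy simplifications. The five-word vocabulary and the two-dimensional embedding vectors are hypothetical, the tokenizer splits on whole words rather than word fragments, and the attention function omits the learned projections, multiple heads, and stacked layers that real models use. What remains is the core of scaled dot-product attention: every position looks at every other position and mixes in the ones it is most similar to.

```python
import math

# Hypothetical vocabulary; real tokenizers hold tens of thousands of entries.
vocabulary = ["how", "do", "language", "models", "work"]
word_to_id = {word: i for i, word in enumerate(vocabulary)}

def encode(text):
    """Text -> sequence of numbers (one ID per word)."""
    return [word_to_id[w] for w in text.lower().split()]

def decode(ids):
    """Sequence of numbers -> text."""
    return " ".join(vocabulary[i] for i in ids)

def softmax(scores):
    """Convert arbitrary scores into positive weights that sum to 1."""
    largest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this position to every position in the input.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # New vector: average of all vectors, weighted by attention.
        mixed = [sum(w * v[d] for w, v in zip(weights, vectors))
                 for d in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up embedding vectors, one per vocabulary entry.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.9, 1.1], [0.2, 0.8]]

token_ids = encode("how do language models work")
vectors = [embeddings[i] for i in token_ids]
contextual, weights = self_attention(vectors)

print(token_ids)
print(weights[2])  # how strongly "language" attends to each word
print(decode(token_ids))
```

Each row of `weights` sums to 1, so it can be read as the share of attention one word gives to every word in the prompt.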

Limitations of AI Content Generators

While LLMs are advanced models, they also have limitations. Understanding these is key to using the technology responsibly.

- Lack of True Understanding: LLMs do not have a true understanding of the world and often struggle with fully grasping context, especially with complex or unusual queries. The generated text is based on patterns learned from training data.

- Possibility of Generating False Information: The model can produce text that is incorrect or misleading.

- No Consciousness: LLMs do not have consciousness or personal opinions. The text they generate reflects only the data on which they were trained.

ChatGPT, Google Gemini, and other AI content generators hold enormous potential but also raise ethical questions. One of the biggest concerns regarding the development of these systems is their potential use to generate misinformation and fake news and to influence public opinion.

Challenges and the Future of LLMs

LLMs are continuously evolving, and their capabilities are expected to grow. Large language models will likely improve at mimicking human conversation and handling increasingly complex tasks. However, it’s essential to remember that LLMs are tools that should be used thoughtfully. Their development faces several challenges.

- Resource Consumption: Training and running LLMs require vast computational power, leading to high costs and a negative environmental impact.

- Bias: LLMs are trained on massive datasets that may contain hidden biases, leading to text generation that reinforces stereotypes and discrimination.

- Hallucinations: LLMs can generate text that sounds convincing but is entirely false. This phenomenon, known as hallucination, is a major problem associated with LLMs.

- Privacy: Collecting large amounts of text data to train LLMs raises significant privacy concerns.

- Interpretability: The operation of LLMs is very difficult for humans to understand, complicating error diagnosis and model improvement.

Researchers worldwide are working to address these issues. Current research focuses on improving energy efficiency, addressing bias, enhancing reliability, preventing hallucinations, and ensuring privacy protection.
