illustration: DALL-E

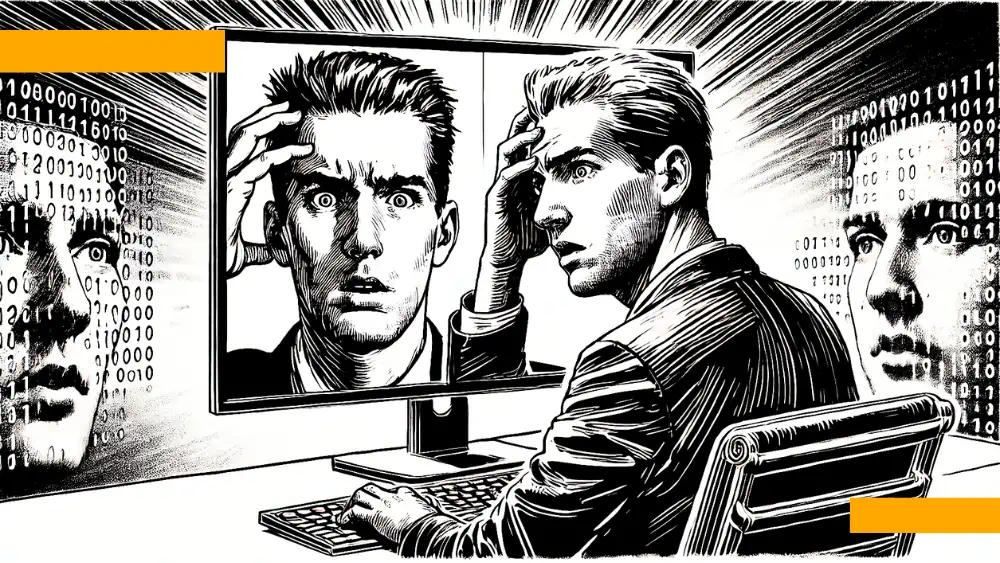

Researchers Qiang Liu, Lin Wang, and Mengyu Luo from the University of Science and Technology in Shanghai set out to examine how deepfake technology affects the perception of information and users' trust in media. For their article "When Seeing Is Not Believing: Self-efficacy and Cynicism in the Era of Intelligent Media," published in Humanities and Social Sciences Communications, they conducted two experiments involving 1,826 participants, analyzing how cynicism toward information changes depending on users' ability to recognize AI-generated content.

The experiments revealed that:

- Individuals with low self-assessment in recognizing AI content are more likely to question the authenticity of news that is personally significant to them.

- Low-risk content paradoxically raises more skepticism than content considered high-risk.

- Users who repeatedly struggle to assess the authenticity of deepfakes lose confidence in their abilities and abandon efforts to verify information.

This phenomenon leads to the so-called "apathetic reality," in which audiences choose indifference over critical thinking about the media they consume.

Why Are We Losing Trust in Media?

The growing cynicism toward AI-generated information is not just a technological issue but also a matter of how audiences process content. Research suggests that users engage more in content analysis when they feel the topic directly affects them.

Data shows that:

| Factor | Impact on AI Self-Assessment | Impact on Cynicism |
|---|---|---|
| High content relevance | Increased confidence | Reduced cynicism |
| Low content relevance | Decreased confidence | Increased cynicism |
| High-risk news | Greater inclination to verify | Lower level of cynicism |
| Low-risk news | Less interest in verification | Higher level of cynicism |

The study's authors highlight the effect of cognitive fatigue. When users repeatedly encounter situations where they cannot distinguish deepfakes from real content, they stop making an effort to check. Ultimately, instead of verifying authenticity, they begin treating all information as potentially unreliable.

What Can We Do?

Experts emphasize that the solution lies not only in developing deepfake detection technologies but also in shaping new media literacy models.

- Social media platforms should implement more advanced mechanisms for labeling AI-generated content.

- Users should be trained not only in recognizing fake news but also in consciously processing information in an era of informational chaos.

- Journalists should make greater use of content verification tools and build communication strategies based on source transparency.

Liu, Wang, and Luo's study found that even a small increase in users' confidence in their ability to recognize AI-generated content leads to a significant reduction in cynicism and greater engagement in content analysis. This means that education and tools supporting content verification can help audiences regain control over what they consider true.

The Future of Trust in Information

Deepfake news is a challenge we will face for years to come. In the age of artificial intelligence, it is not just technology that determines what we believe but also our ability to recognize and critically analyze content. If we do not begin developing skills to navigate the world of synthetic information, we may find ourselves in a reality where we cannot even trust what we see with our own eyes.

* * *

Article by Liu, Q., Wang, L., Luo, M. (2025), "When Seeing Is Not Believing: Self-efficacy and Cynicism in the Era of Intelligent Media," published in Nature Humanities and Social Sciences Communications, available at https://www.nature.com/articles/s41599-025-04594-5
